In a world of bots, trolls, and scams, social media content moderation ensures your brand’s social channels (and their comments sections) are safe, friendly, and pleasant places to be.

Key takeaways

- Content moderation is how you protect your brand, your customers, and your team from the worst parts of the internet.

- Great moderation is about more than deleting bad comments. It’s about responding thoughtfully, catching crises early, and staying compliant.

- Artificial intelligence is your moderator’s best friend. AI-powered tools handle the volume, humans handle the nuance.

- Tools like Hootsuite make moderation easy. From tracking brand mentions to managing inbound messages, you can keep your channels safe from one dashboard.

What is content moderation?

Content moderation is the process of reviewing, filtering, and responding to comments, messages, and other user-generated content on your social channels.

The goal is simple: make sure real people get real responses, while spam, trolls, and harmful content get filtered out.

Content moderation protects your brand by flagging or removing anything that could harm your reputation or your audience’s experience, making it a core part of any social media strategy.

Why is content moderation important?

Content moderation keeps your brand's social presence healthy. It helps you stay responsive, protect your reputation, and get ahead of issues early.

Here are three reasons content moderation matters:

1. It supports customer service

Customers often turn to social for support. A content moderator makes sure those messages get answered quickly, whether that means replying directly or routing them to the right team.

On smaller teams, moderators may handle responses themselves. On larger teams, they help triage incoming DMs, comments, and complaints so nothing slips through the cracks.

One thing to keep in mind: moderation isn’t about deleting every negative comment to make your brand look perfect, and it’s not about censorship either. The goal is to remove harmful content, not silence constructive criticism.

2. It protects your brand image

Content moderation protects your brand image in two big ways: by making sure customers feel heard, and by keeping your channels free of offensive content.

Customers notice when a brand ignores them. Even if someone reaches out privately, they’ll often vent their frustration publicly if no one responds. Staying on top of incoming messages helps build social trust, and shows the world that your brand actually pays attention.

At the same time, unchecked negativity can quietly chip away at your brand image over time. You might get some sympathy points at first, but readers eventually start to associate your channels with the chaos in your comments, not the content you’re posting.

This matters even more when you’re working with influencers or sharing user-generated content. If a creator shares their work with your brand and gets hit with toxicity in the comments, they’re unlikely to ever do it again, and your UGC pipeline takes the hit.

3. It helps you get ahead of crises

Content moderation helps with crisis management because moderators are often the first to spot a brewing PR issue.

A sudden spike in angry comments, coordinated trolling, or misinformation about your brand can escalate fast, and catching it early gives your team time to respond before it spirals.

A good moderator pays attention to patterns: Are the same complaints coming up again and again? Are people misinterpreting a recent post? Spotting these signals early lets your team loop in PR, leadership, or legal.

In short: moderation isn’t just about cleaning up comments. It’s about keeping your finger on the pulse of how your brand is being talked about, so you can act fast when it matters.

What are the types of content moderation?

There are five main types of content moderation, each with its own balance of speed, scale, and human review:

- Pre-moderation

- Post-moderation

- Reactive moderation

- Distributed moderation

- Automated moderation

Pre-moderation

Pre-moderation is when moderators review content before it goes live. It's the safest option, since nothing reaches your audience without someone approving it first, but it's also the slowest.

This approach works well for high-risk industries like healthcare or finance, where compliance matters more than speed.

Post-moderation

Post-moderation is when content goes live first and gets reviewed afterward. It keeps the conversation flowing in real time, but means harmful content can briefly slip through before moderators can take action.

Most brands use post-moderation for everyday comments and DMs, where speed matters and the risk is relatively low.

Reactive moderation

Reactive moderation relies on your community to flag content that violates your community guidelines. Once something gets reported, your moderators review it against those guidelines and decide what to do next.

This works well for large online communities where moderating every post in real time isn’t realistic.
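Under the hood, reactive moderation often comes down to a report threshold. Here's a minimal Python sketch, assuming a hypothetical rule where a post lands in the moderators' queue once a set number of different users report it. The `ReportQueue` class and the default threshold of 3 are illustrative only, not how any particular platform works.

```python
from collections import defaultdict


class ReportQueue:
    """Hypothetical reactive-moderation queue: content is only sent to
    human moderators after enough distinct users report it."""

    def __init__(self, threshold: int = 3) -> None:
        self.threshold = threshold
        # Maps a post ID to the set of users who reported it, so repeat
        # reports from the same user are only counted once.
        self.reports: dict[str, set[str]] = defaultdict(set)
        self.review_queue: list[str] = []

    def report(self, post_id: str, reporter_id: str) -> bool:
        """Record a report; return True if this report sent the post to review."""
        if post_id in self.review_queue:
            return False  # already waiting for a moderator
        self.reports[post_id].add(reporter_id)
        if len(self.reports[post_id]) >= self.threshold:
            self.review_queue.append(post_id)
            return True
        return False


queue = ReportQueue(threshold=3)
queue.report("post-1", "alice")
queue.report("post-1", "alice")  # duplicate report, not counted again
queue.report("post-1", "bob")
print(queue.review_queue)  # []
queue.report("post-1", "carol")
print(queue.review_queue)  # ['post-1']
```

Counting unique reporters rather than raw reports is one way to keep a single angry user from pushing content into review on their own.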

Distributed moderation

Distributed moderation spreads the responsibility across a team of moderators, typically broken up by region, time zone, or platform. Backed by shared guidelines, it keeps moderation decisions consistent across global audiences without burning out a single team, which makes it a common choice for enterprise brands.

Automated moderation

Automated moderation uses AI and algorithms to flag, filter, or remove objectionable content based on pre-set rules (think: profanity filters, spam detection, or keyword blocklists).

It’s fast and scalable, but it works best when paired with human moderation, since algorithms often miss context and nuance that real people catch easily.
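One common way to pair automation with human review is to act on a model's confidence. The Python sketch below assumes a hypothetical classifier that returns a 0 to 1 "harmfulness" score; `toy_score` is a stand-in that just counts flagged words, and the 0.9 and 0.5 cut-offs are illustrative, not recommendations.

```python
from typing import Callable


def toy_score(comment: str) -> float:
    """Stand-in for a real AI classifier (hypothetical): each flagged word
    adds 0.5 to the score, capped at 1.0."""
    flagged = {"scam", "idiot", "garbage"}
    words = (word.strip(".,!?").lower() for word in comment.split())
    hits = sum(word in flagged for word in words)
    return min(1.0, hits * 0.5)


def triage(comment: str, score_fn: Callable[[str], float],
           hide_at: float = 0.9, review_at: float = 0.5) -> str:
    """Decide what happens to a comment based on its harmfulness score."""
    score = score_fn(comment)
    if score >= hide_at:
        return "hide"    # confident enough to act automatically
    if score >= review_at:
        return "review"  # ambiguous: a human moderator makes the call
    return "allow"       # publish without intervention


print(triage("This is a scam, total garbage!", toy_score))  # hide
print(triage("Is this a scam?", toy_score))                 # review
print(triage("Love the new colors", toy_score))             # allow
```

The middle band is where humans handle the nuance: someone asking whether an offer is a scam may be a genuine customer with a real question, so that comment goes to a person instead of being hidden.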

What types of content can you moderate?

Content moderation covers multiple surfaces online, from public comments to direct messages and mentions. Most moderation teams handle a mix of the following:

- Public comments: Replies on your social media posts across platforms like Instagram, Facebook, LinkedIn, and TikTok.

- Direct messages (DMs): Private conversations between your brand and customers, often involving questions or complaints.

- Mentions and tags: Posts where users mention your brand, with or without tagging your account directly.

- Reviews and ratings: Customer feedback on platforms like Google, Yelp, Facebook, or your own website.

- User-generated content (UGC): Photos, videos, and posts created by customers or fans about your brand.

- Livestreams: Real-time comments during live events on platforms like Instagram Live, TikTok Live, or YouTube Live.

- Forums and community spaces: Comments and discussions on Discord servers, subreddits, or Facebook Groups.

- Influencer and partner content: Posts from creators or brand partners that feature your products, especially when comments roll in on those posts.

In short, if it’s a place where someone can talk to or about your brand, it probably needs some level of moderation.

5 content moderation best practices

Whether your moderators are full-time pros or marketing teammates pulling double duty, a few smart habits can make the job a lot easier.

Here are five best practices for effective content moderation:

- Set up clear guidelines for moderators

- Respond to all real comments and messages

- Use filters and alerts

- Automate straightforward tasks

- Support your moderation team

1. Set up clear guidelines for moderators

Clear content moderation policies make the job easier for your team. Without them, every moderator is left to make judgment calls on their own, which can lead to mixed messaging or, worse, accidentally letting harmful content slip through.

A good starting point is your existing social media style guide and social media policy, which already cover how to talk about your brand and products.

From there, your moderation guidelines should also include:

- How moderators should handle rude or abusive messages

- When to pass conversations to another department (like customer service or PR)

- When to escalate to senior staff or legal

Since incoming comments can be the first warning of a social media crisis, your moderation guidelines should also align with your social media crisis management plan.

2. Respond to all real comments and messages

Responding to real comments and messages is one of the most important parts of moderation, but the key word here is real.

Content moderation tools help filter out spam, bots, and noise so your team can focus on engaging with actual people.

Make sure to review your filtered messages regularly so legitimate messages don’t get missed in the cleanup. And if someone’s being rude but their concern is genuine, respond anyway. Keep your tone professional, and remember: how you respond to one person is often watched by hundreds more.

Of course, some people will never be satisfied with your response and are focused on stirring up trouble. In those cases, remember the old saying: don’t feed the trolls. Acknowledge them once if it makes sense, then move on.

3. Use filters and alerts

Filters hide the worst comments before your audience has to see them, and alerts tell your team when something needs a closer look. Most platforms let you build a list of blocked words or phrases, so anything containing them gets hidden automatically.

What you filter depends on your industry. A skateboard brand might allow looser language, while a pharmaceutical company will need much tighter restrictions.

You can also use filters to catch spam by blocking common scam phrases, such as “I’m paying.”

Source: Instagram

Most platforms offer built-in tools to do this, and dedicated moderation tools (more on those below) take it a step further with custom alerts and smarter detection.
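If you're curious how a blocklist works behind the scenes, here's a minimal Python sketch using the standard `re` module. The phrase lists and the `build_filter` and `should_hide` helpers are hypothetical examples; real platform filters are configured through each platform's own settings, not code like this.

```python
import re


def build_filter(blocked_phrases: list[str]) -> re.Pattern[str]:
    """Compile blocked words/phrases into one case-insensitive pattern.

    Word boundaries keep short entries from matching inside innocent
    words, so "hell" is blocked but "hello" is not.
    """
    escaped = "|".join(re.escape(phrase) for phrase in blocked_phrases)
    return re.compile(rf"\b(?:{escaped})\b", re.IGNORECASE)


def should_hide(comment: str, pattern: re.Pattern[str]) -> bool:
    """Return True if the comment contains any blocked word or phrase."""
    # Normalize curly apostrophes so "I’m paying" still matches "I'm paying".
    normalized = comment.replace("\u2019", "'")
    return pattern.search(normalized) is not None


# Different industries, different tolerances (example lists only).
spam_phrases = ["I'm paying", "DM me to earn"]
skate_brand = build_filter(spam_phrases)
pharma_brand = build_filter(spam_phrases + ["hell", "miracle cure"])

print(should_hide("I’m paying $500 a day, DM me!", skate_brand))         # True
print(should_hide("What the hell happened to my order?", skate_brand))   # False
print(should_hide("What the hell happened to my order?", pharma_brand))  # True
print(should_hide("Hello! When does the new deck drop?", pharma_brand))  # False
```

One caveat of this sketch: `\b` boundaries only work next to letters, digits, and underscores, so entries that begin or end with punctuation or emoji would need a different matching rule.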

4. Automate straightforward tasks

Automating routine tasks frees up your moderators to focus on the conversations that actually need a human touch. Repetitive DMs and common questions can usually be handled with a smart auto-response or a saved reply.

Many social platforms let you set up basic auto-responses natively, but a more advanced tool like Hootsuite Inbox can take on much more of that work.

With Hootsuite Inbox, you can bridge the gap between social media and customer service.

Hootsuite Inbox offers automated message routing, customer satisfaction surveys, and AI-powered chatbots that can handle simple questions on their own.
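At its simplest, message routing is a set of rules that decides who sees what. Here's a rough Python sketch of keyword-based routing. The team names and keywords are hypothetical, and this is not how Hootsuite Inbox is configured; it only illustrates the idea.

```python
# Hypothetical routing rules, checked in order: the first match wins, so
# potential crises are listed first and reach PR before anyone else.
ROUTING_RULES: list[tuple[str, tuple[str, ...]]] = [
    ("pr", ("lawsuit", "boycott", "journalist")),
    ("billing", ("refund", "invoice", "charged twice")),
    ("support", ("not working", "broken", "can't log in")),
]


def route(message: str, default: str = "social") -> str:
    """Return the team that should handle a message."""
    # Lowercase and normalize curly apostrophes before matching.
    text = message.lower().replace("\u2019", "'")
    for team, keywords in ROUTING_RULES:
        if any(keyword in text for keyword in keywords):
            return team
    return default  # everything else stays with the social team


print(route("I want a refund for my order"))             # billing
print(route("A journalist asked me about your recall"))  # pr
print(route("The app is not working again"))             # support
print(route("Love this!"))                               # social
```

Plain substring matching like this will misfire sometimes (sarcasm, for example), which is where AI-powered routing and a human review step can help.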

5. Support your moderation team

Supporting your team is the most important best practice, full stop. Moderating content means seeing some of the worst stuff the internet has to offer, and the toll on moderators’ mental health and well-being is real.

Make wellness a priority. Check in regularly with your moderators, ask what’s working (and what’s not), and create space for them to decompress between tough interactions.

The best moderation programs treat moderators like the skilled, valuable team members they are, not interchangeable cogs.

4 content moderation tools for businesses of all sizes

The best online content moderation tools help your team filter spam, flag harmful content, and keep up with high comment volume without burning out.

Here are four content moderation tools to consider:

- Hootsuite

- Respondology

- BrandFort

- Smart Moderation

1. Hootsuite

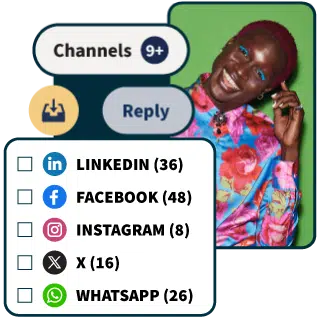

Hootsuite is an all-in-one social media management platform with two features built specifically for content moderation: Hootsuite Listening and Hootsuite Inbox.

Together, they help your team monitor what people are saying about your brand and respond to incoming messages from one dashboard.

Hootsuite Listening

Every Hootsuite plan includes social listening tools to help you spot conversations happening around your brand.

Use Quick Search to discover trending hashtags, brands, and events anywhere in the world, or dive deeper for personalized insights on your brand.

You can also use Listening to:

- Track key metrics. Are more people talking about you this week? What’s the vibe of their posts? Hootsuite Listening doesn’t just track what people are saying — it uses enhanced sentiment analysis to tell you how they really feel.

- Spot top themes. How are people talking about you? What are the most popular positive and negative posts about? Which other conversations are you showing up in?

- Filter results. Ready to get into specifics? The results tab will show you a selection of popular posts related to your search terms — you can filter by sentiment, channel, and more.

Hootsuite Inbox

Hootsuite Inbox brings every conversation about your brand into one place: DMs, public comments, mentions, dark comments, and even emoji reactions.

From there, your team can triage and respond to every message without jumping between platforms.

With Hootsuite Inbox, you can:

- Track the history of any individual’s interactions with your brand on social media (across your accounts and platforms), giving your team the context needed to personalize replies

- Add notes to customers’ profiles (Inbox integrates with Salesforce and Microsoft Dynamics)

- Handle messages as a team, with intuitive message queues, task assignments, statuses, and filters

- Track response times and CSAT metrics

2. Respondology

Respondology is an automated content moderation tool with a particular focus on eliminating racism, slurs, and other abusive comments. It’s a strong fit for brands and creators who want a tool laser-focused on trust and safety, not general-purpose moderation.

Source: Respondology

3. BrandFort

BrandFort is an AI-powered moderation tool for Meta platforms like Facebook and Instagram. Its AI is trained to pick up on the real meaning behind a message rather than just flagging surface-level keywords.

When BrandFort spots comments containing hate, spam, profanity, or negative sentiment, it automatically flags and hides them.

With multi-language support, it’s a good option for global brands moderating comments across regional accounts and audiences.

BrandFort integrates with Hootsuite.

Source: BrandFort

4. Smart Moderation

Smart Moderation is an AI-powered tool that automatically filters inappropriate content across Facebook, Instagram, and YouTube. It targets abusive language, hate speech, spam, trolling, and bots.

What sets Smart Moderation apart is machine learning: it gradually picks up what’s inappropriate (and what isn’t) for your specific brand. The more you use it, the better it gets at flagging content that fits your unique guidelines.

And since it integrates with Hootsuite, you can manage everything from one dashboard.

Source: Hootsuite App Directory

Content moderation FAQs

What is content moderation and how do enterprises manage it at scale?

How does AI content moderation compare to human moderation?

What are the best practices for content moderation and brand safety?

What risks do brands face without effective content moderation?

How do companies build a content moderation workflow for social media?

Save time managing your social media presence with Hootsuite. Publish and schedule posts, find relevant conversations, engage your user base, measure results, and more — all from one simple dashboard. Try it free today.