Content moderation is a critical part of any brand’s social media strategy. In a world of bots, trolls, and scams, social media moderation ensures your brand’s social channels – and their comments sections – are safe, friendly, and pleasant places to be.

What is content moderation?

Content moderation is a part of social media management in which a content moderator handles incoming messages, comments, and other content generated by third parties.

Those third parties might be your followers, customers, or random strangers on the internet. The content moderator’s role is to make sure that real people get a real response, while trolls and bots get sorted into the virtual dustbin.

Content moderators also screen comments and other public-facing content for profanity, obscenity, slurs, and other offensive material.

Why is content moderation important?

Content moderation is important for a couple of main reasons.

1. Customer service

Customers and potential customers may use your social channels to reach out for help. A content moderator makes sure incoming queries are answered appropriately.

On small teams, the content moderator might be responsible for answering questions directly. On larger teams, they might have help from other members of the marketing team or from the customer service team.

Source: Hootsuite Social Trends 2023

Either way, the content moderator makes sure incoming DMs and public queries or complaints are handled. That might mean addressing messages themselves or assigning them to someone else.

It’s important to note that the content moderation process is not about trying to make it look like you only get positive comments. Instead, it’s about removing content that violates reasonable standards of decent behavior. Negative comments that are civil should be responded to and addressed, rather than removed.

2. Brand image

Social media content moderation affects brand image in two ways. First, it’s very clear on social media when a brand ignores its customers. Even if someone initially reaches out via DM or other private channels, they’ll soon take their issues public if they don’t get a response.

Staying on top of incoming content is a good way to build social trust.

Unfortunately, there are some nasty people on the internet, and they’re going to post nasty comments on your social accounts. Leaving those unattended can also harm your brand image, as no one wants to see offensive content in the comments of a social post.

You might initially get some sympathy from fans, but if you routinely leave ugly comments unchecked, your brand image will begin to suffer. Since social is an important tool for brand and purchase research, this can have a direct impact on your sales.

This is particularly important if you work with influencers or publish user-generated content.

Why is content moderation important for user-generated campaigns? Because when you’re sharing someone else’s content, it’s even more important to keep the comments civil. Someone who shares their content with your brand once is not likely to do so again if they have to read vitriol, slurs, or profanity in response.

5 content moderation best practices

1. Set up clear guidelines for moderators

Social media moderation is a tough job. A clear set of rules makes the work less stressful for moderators and potentially more effective for the brand.

Just like your social media style guide and social media policy, social media content moderation guidelines outline how to refer to your brand and your products on social channels. In fact, these two documents can be a great starting point when creating your content moderation guidelines.

Your content moderation guidelines also need to explain:

- how moderators should deal with rude and abusive messages and comments

- when to pass messages on to another department

- and when to get more senior staff involved

Incoming comments can also be your first warning of a social media crisis. So make sure your social media moderation guidelines align with and refer to your social media crisis management plan.

2. Answer all real comments and messages

We’ve already talked about why it’s important to answer comments and messages. Here, let’s focus on the “real” part of this best practice.

Content moderation tools help filter out spam comments and messages so you can focus your energy on responding to real people.

It’s important to review your filtered messages regularly to ensure no real messages get missed. Even if people are rude or inappropriate, it’s a best practice to respond. Just make sure you keep things business-like and never stoop to their level.

Of course, some people will never be satisfied with your response and are focused on stirring up trouble. In this case, remember the old saying, “Don’t feed the trolls.” Acknowledge that you have engaged with them to the extent you are able and that you cannot help them any further.

3. Use filters and alerts

There are some words and phrases you know for sure you never want to see in the public comments on your social posts. What those words and phrases are will vary. For example, a skateboard brand might have a broader range of acceptable vocabulary than a pharmaceutical firm.

Fortunately, the social networks offer built-in tools to filter out comments based on your pre-selected list of no-go words.

You can also use these tools to help you manage spam comments. For example, you could block comments that contain the phrase “I’m paying”:

Source: Instagram

Other platforms offer similar tools. Or you can set up these filters through your dedicated content moderation tool (more on those below).
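To make the idea concrete, here is a minimal sketch of how a no-go phrase filter could work. It is an illustration only, not how Instagram or any other platform implements its filters. The phrase list and the function names (`normalize`, `find_blocked_phrases`, `should_hide`) are hypothetical.

```python
import re

# Hypothetical no-go list; a real one would come from your moderation guidelines.
BLOCKED_PHRASES = ["i'm paying", "free followers"]


def normalize(text: str) -> str:
    """Lowercase, straighten curly apostrophes, and collapse whitespace."""
    text = text.lower().replace("\u2019", "'")
    return re.sub(r"\s+", " ", text).strip()


def find_blocked_phrases(comment: str, blocked=BLOCKED_PHRASES) -> list[str]:
    """Return the blocked phrases that appear in the comment as whole words."""
    cleaned = normalize(comment)
    hits = []
    for phrase in blocked:
        # Lookarounds stop "scam" from matching inside "scampi".
        pattern = r"(?<!\w)" + re.escape(normalize(phrase)) + r"(?!\w)"
        if re.search(pattern, cleaned):
            hits.append(phrase)
    return hits


def should_hide(comment: str) -> bool:
    """A comment is hidden if it contains at least one blocked phrase."""
    return bool(find_blocked_phrases(comment))
```

Matching whole words rather than raw substrings is a deliberate choice here: it keeps the filter from hiding innocent comments that merely contain a blocked word inside a longer one.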

4. Automate straightforward tasks

Many of the messages that come in, especially to your DMs, will be questions you get over and over again. Fortunately, some of these inquiries can be managed through automated responses.

You can set up ultra-basic autoresponses through the social platforms themselves.

But a more advanced tool like Hootsuite Inbox can handle that work for you.

With Hootsuite Inbox, you can bridge the gap between social media engagement and customer service.

Handy automations, like automated message routing, auto-responses and saved replies, automatically triggered customer satisfaction surveys, and AI-powered chatbot features, make content moderation a LOT easier.
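If you are curious what this kind of automation looks like under the hood, the sketch below shows one simple way to combine saved replies with message routing. It does not use or represent the Hootsuite Inbox API. The keywords, reply text, queue names, and the `triage` function are all assumptions made up for illustration.

```python
import re
from dataclasses import dataclass

# Hypothetical saved replies, keyed by the words that trigger them.
SAVED_REPLIES = {
    ("hours", "open"): "We're open 9am to 5pm, Monday to Friday.",
    ("shipping", "delivery"): "Standard shipping takes 3 to 5 business days.",
}

# Hypothetical words that mean a person should handle the message.
ESCALATION_KEYWORDS = {"refund", "broken", "complaint"}


@dataclass
class Decision:
    action: str  # "auto_reply", "route", or "manual"
    detail: str  # the reply text, or the name of the queue


def triage(message: str) -> Decision:
    """Decide whether to auto-reply, route to a team, or leave for a moderator."""
    words = set(re.findall(r"[a-z']+", message.lower()))
    # Check escalation first so complaints never get a canned answer.
    if words & ESCALATION_KEYWORDS:
        return Decision("route", "customer_service")
    for triggers, reply in SAVED_REPLIES.items():
        if words & set(triggers):
            return Decision("auto_reply", reply)
    return Decision("manual", "moderator_queue")
```

For example, `triage("What are your hours?")` returns an `auto_reply` decision, while a message that mentions a refund is routed to the customer service queue even if it also mentions shipping.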

5. Support your team

Content moderators provide immense value to your organization. It’s important to recognize that they do a difficult job and offer them appropriate support.

Content moderators are on the front lines of the sometimes dark world of online comments. They can see some challenging things and have to deal with even more challenging people. Recognize that your content moderators are not robots. They need time and support to decompress from those difficult interactions.

Make workplace wellness a priority. Check in regularly to see how your moderators are doing, and solicit their input on any ways in which you can make their work less stressful.

4 content moderation tools for businesses of all sizes

1. Hootsuite

Hootsuite can help with your content moderation in a couple of key ways.

Hootsuite Listening

Every Hootsuite plan includes everything you need to get started with social listening.

Use Quick Search to discover trending hashtags, brands and events anywhere in the world, or dive deeper for personalized insights on your brand.

You can track what people are saying about you, your top competitors, or your products, using up to two keywords to track anything at all over the last 7 days.

Plus, you can use Quick Search to analyze things like:

- Key metrics. Are more people talking about you this week? What’s the vibe of their posts? Hootsuite Listening doesn’t just track what people are saying — it uses enhanced sentiment analysis to tell you how they really feel.

- Top themes. How are people talking about you? What are the most popular positive and negative posts about? Which other conversations are you showing up in?

- Results. Ready to get into specifics? The results tab will show you a selection of popular posts related to your search terms — you can filter by sentiment, channel, and more.

Hootsuite Inbox

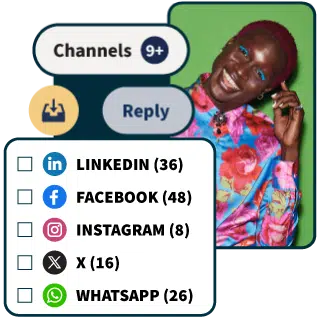

With Hootsuite Inbox, you can manage all of your social media messages in one place. This includes:

- Private messages and DMs

- Public messages and posts on your profiles

- Dark and organic comments

- Mentions

- Emoji reactions

… and more.

The all-in-one agent workspace makes it easy to:

- Track the history of any individual’s interactions with your brand on social media (across your accounts and platforms), giving your team the context needed to personalize replies

- Add notes to customers’ profiles (Inbox integrates with Salesforce and Microsoft Dynamics)

- Handle messages as a team, with intuitive message queues, task assignments, statuses, and filters

- Track response times and CSAT metrics

2. Respondology

Source: Respondology

Respondology is an automated content moderation tool with a particular focus on eliminating racism, slurs, and other abusive comments. It also helps filter out spam and bots, along with inappropriate comments that are potentially damaging to your brand.

3. BrandFort

Source: BrandFort

BrandFort uses artificial intelligence to filter out hate, spam, profanity, and overtly negative comments on Facebook and Instagram. It offers support for multiple languages.

4. Smart Moderation

Source: Hootsuite App Directory

Smart Moderation is another automation tool that moderates comments on Facebook, Instagram and YouTube. It’s designed to filter out inappropriate content including abusive language, hate speech, spam, trolling, and bots.

Content moderation FAQs

What does a content moderator do?

Content moderators deal with all incoming comments and messages on social media platforms, both public and private.

What are the types of content moderation?

The main types of content moderation are:

- Pre-moderation. This is when all comments go into a queue and have to be approved before they are posted. This content moderation process is used in blogs or online communities rather than in the narrower content moderation definition that applies to social media.

- Post-moderation. This is the most common kind of content moderation in social media, where your content moderator reviews all comments after they are posted.

- Automated moderation. This type of content moderation refers to blocking a list of specific words or phrases through a more sophisticated content moderation tool.
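The difference between the three types comes down to whether a new comment is visible right away and whether a person still needs to look at it. The sketch below models that under simplified assumptions. The `Mode` enum, the `handle_new_comment` function, and the blocked word list are hypothetical, and sending blocked comments to a review folder is one possible design rather than how any particular tool behaves.

```python
import re
from enum import Enum


class Mode(Enum):
    PRE = "pre-moderation"
    POST = "post-moderation"
    AUTOMATED = "automated moderation"


# Hypothetical blocked word list for the automated mode.
BLOCKED_WORDS = {"spamword"}


def handle_new_comment(comment: str, mode: Mode) -> dict:
    """Return whether the comment is visible now and whether a human should review it."""
    if mode is Mode.PRE:
        # Held in a queue until a moderator approves it.
        return {"visible": False, "needs_review": True}
    if mode is Mode.POST:
        # Published immediately, reviewed afterwards.
        return {"visible": True, "needs_review": True}
    # Automated: hide on a blocked word and flag it for a periodic check.
    words = set(re.findall(r"\w+", comment.lower()))
    blocked = bool(words & BLOCKED_WORDS)
    return {"visible": not blocked, "needs_review": blocked}
```

Flagging blocked comments for review, rather than discarding them, leaves room to check filtered messages regularly so no real message gets missed.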

What skills do you need to be a content moderator?

First off, content moderators require the ability to deal with challenging work. It can be a fun job because it involves interacting with fans and followers of your brand’s social channels. But it also involves dealing with spammers, scammers, angry customers, and other difficult tasks.

Content moderation teams also need to understand how to use social tools effectively. This includes both the social platforms themselves as well as any content moderation tools in use.

Finally, content moderators require good writing and editing skills, so they are able to represent the brand well through their responses to comments and messages on social channels.

Save time managing your social media presence with Hootsuite. Publish and schedule posts, find relevant conversations, engage your audience, measure results, and more, all from one simple dashboard. Try it free today.